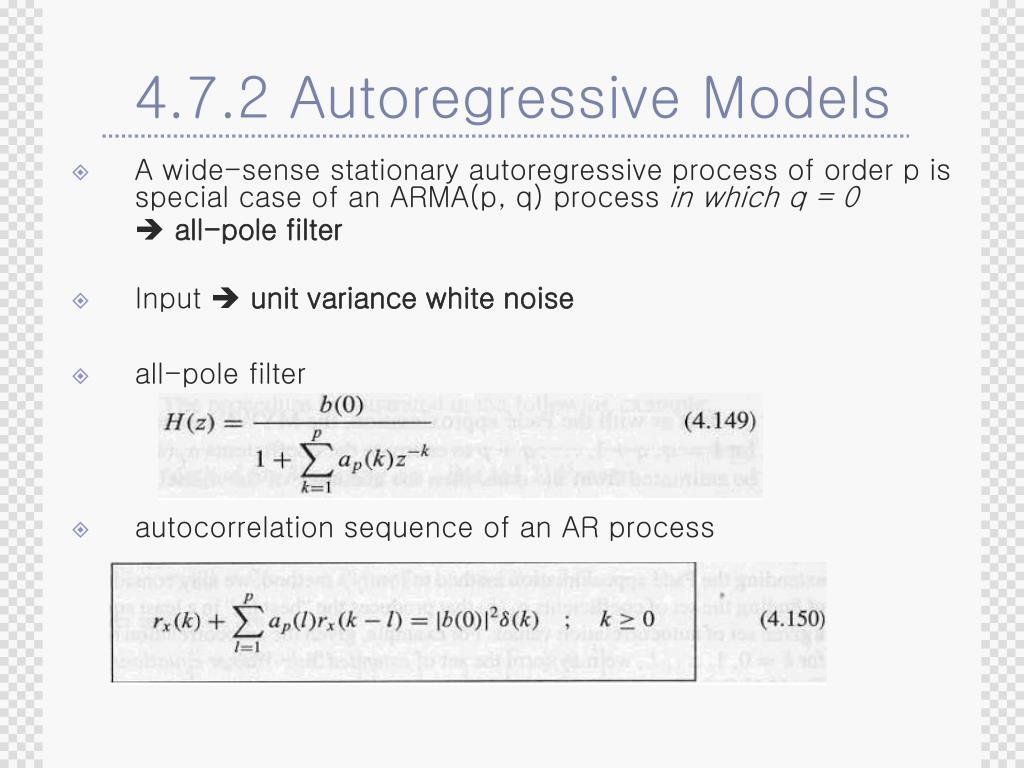

Lasso selects a reduced set of the known covariates for use in a model. It was introduced in order to improve the prediction accuracy and interpretability of regression models, and it is closely related to basis pursuit denoising. Lasso's ability to perform subset selection relies on the form of the constraint and has a variety of interpretations, including in terms of geometry, Bayesian statistics, and convex analysis. Though originally defined for linear regression, lasso regularization is easily extended to other statistical models, including generalized linear models, generalized estimating equations, proportional hazards models, and M-estimators.

The linear regression case reveals a substantial amount about the estimator, including its relationship to ridge regression and best subset selection and the connections between lasso coefficient estimates and so-called soft thresholding. It also reveals that (like standard linear regression) the coefficient estimates do not need to be unique if covariates are collinear.

The Lasso is a popular model selection and estimation procedure for linear models that enjoys nice theoretical properties. In this paper, we study the Lasso estimator for fitting autoregressive time series models. The asymptotic theory for our estimator is nonstandard. We conduct a simulation study that shows that the Lasso-type GMM correctly selects the true model much...
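The link between lasso coefficient estimates and soft thresholding can be illustrated with a minimal sketch. It assumes an orthonormal design (XᵀX = I) and the objective (1/2)‖y − Xβ‖² + λ‖β‖₁, under which each lasso coefficient is the corresponding least-squares coefficient shrunk toward zero by λ. The function name `soft_threshold` and the sample values are illustrative assumptions, not taken from any particular library.

```python
def soft_threshold(z, lam):
    """Shrink z toward zero by lam; return 0.0 when |z| <= lam."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


# Hypothetical least-squares coefficients under an orthonormal design.
ols = [3.0, -0.4, 1.2, 0.1]
lam = 0.5  # assumed penalty level

# Under the stated assumptions, the lasso solution is the
# coordinate-wise soft-thresholded least-squares solution.
lasso = [soft_threshold(b, lam) for b in ols]
print(lasso)
```

Coefficients whose magnitude is at most λ are set exactly to zero, which is how the penalty performs variable selection. Ridge regression, by contrast, rescales every coefficient without zeroing any of them.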

In statistics and machine learning, lasso (least absolute shrinkage and selection operator; also Lasso or LASSO) is a regression analysis method that performs both variable selection and regularization in order to enhance the prediction accuracy and interpretability of the resulting statistical model. It was originally introduced in geophysics, and later by Robert Tibshirani, who coined the term. Lasso was originally formulated for linear regression models.

However, lasso-type estimators for autoregressive time series models currently still focus on models with homoscedastic residuals. Therefore, an iteratively reweighted adaptive lasso algorithm for the estimation of time series models under conditional heteroscedasticity is presented in a high-dimensional setting. (2020) derived some consistency results of OLS-based estimators with LASSO penalization for -mixing Gaussian VAR processes. Section 2 details the NNAR model and parameter estimation procedure using the profile least square method. Section 3 presents the gas flow network data on an.

Rinaldo A (2011) Autoregressive process modeling via the Lasso procedure. J Multivar Anal 102(3):528-549

Tibshirani R (1996) Regression shrinkage and.
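As a rough illustration of fitting an autoregressive model with a lasso penalty, the sketch below regresses each observation on its previous p values and minimizes (1/(2n)) Σ (y_t − Σ_j φ_j y_{t−j})² + λ Σ_j |φ_j| by cyclic coordinate descent. This is the plain lasso with homoscedastic errors, not the iteratively reweighted adaptive lasso mentioned above. The function names, the simulated AR(1) series, and the values of `p` and `lam` are all assumptions chosen for illustration, and the series is assumed to have mean zero so no intercept is fitted.

```python
import random


def soft_threshold(z, lam):
    """Shrink z toward zero by lam; return 0.0 when |z| <= lam."""
    return max(z - lam, 0.0) if z >= 0 else min(z + lam, 0.0)


def lagged_design(series, p):
    """Rows are (y_{t-1}, ..., y_{t-p}); targets are y_t."""
    X = [[series[t - j] for j in range(1, p + 1)] for t in range(p, len(series))]
    y = [series[t] for t in range(p, len(series))]
    return X, y


def lasso_ar(series, p, lam, n_iter=100, tol=1e-8):
    """Cyclic coordinate descent for a lasso-penalized AR(p) fit."""
    X, y = lagged_design(series, p)
    n = len(y)
    phi = [0.0] * p
    resid = list(y)  # residuals when all coefficients are zero
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(p):
            if col_sq[j] == 0.0:
                continue
            # Covariance of lag j with the residual that excludes lag j.
            rho = sum(X[i][j] * (resid[i] + X[i][j] * phi[j]) for i in range(n)) / n
            new = soft_threshold(rho, lam) / col_sq[j]
            delta = new - phi[j]
            if delta != 0.0:
                for i in range(n):
                    resid[i] -= X[i][j] * delta
                phi[j] = new
            max_change = max(max_change, abs(delta))
        if max_change < tol:
            break
    return phi


# Simulate a hypothetical AR(1) series with coefficient 0.6.
random.seed(0)
series = [0.0]
for _ in range(1000):
    series.append(0.6 * series[-1] + random.gauss(0.0, 1.0))

# Deliberately over-specify the order (p=5) and let the penalty prune lags.
phi_hat = lasso_ar(series, p=5, lam=0.2)
print([round(c, 3) for c in phi_hat])
```

With a penalty of this size, the first-lag coefficient is expected to be shrunk below its true value, and the higher lags are typically driven to zero or close to it. In practice λ would be chosen by cross-validation or an information criterion rather than fixed by hand.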
